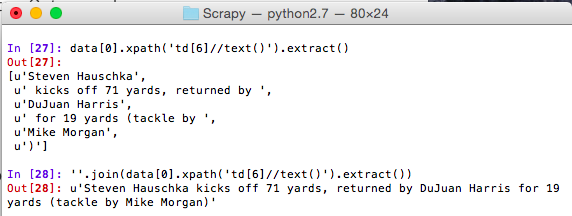

It seems that the title of the product is contained in a link (`<a>`) tag that has no class.

Let's use this class as a selector by typing `response.css('div.txt-wrap')`. It will return all the elements that match this class, which to be honest is overwhelming and won't be of much help. So to take it a bit further, let's type this command again, but limit it to the first match (for example, with `.get()`). It has returned all the HTML code within the first div that meets the criteria, and we can see the price, the name, and the link within that code.

To make our process more efficient, we'll save this last response as a variable. Just enter `wines = response.css('div.txt-wrap')` and now we can call this variable in the next line.

Because we want to get the name of the product, we need to check again where the name is being served.

The fetch command should return a 200 status, meaning that the request succeeded, and save the downloaded page within the `response` variable.

Note: We can check this by typing `response` on our command line.

We can type `view(response)` and it will open the downloaded page in our default browser, or we can just open our browser and navigate to our target page. Either way is fine.

By using the inspection tool in Chrome (Ctrl + Shift + C), we identify the classes or IDs we can use to select each element within the page. Upon closer look, all the information we want to scrape is wrapped within a `<div>` with `class="txt-wrap"` across all product cards.

Note: If you don't feel comfortable going through this article without some knowledge of Python syntax, we recommend W3Schools' Python tutorial as a starting point.

The Scrapy team recommends installing their framework in a virtual environment (VE) instead of system-wide, so that's exactly what we're going to do. Open your command prompt on your desktop (or in the directory where you want to create your virtual environment) and type `python -m venv scrapy_tutorial`.

The venv command will create a VE at the path you provided, in this case `scrapy_tutorial`, based on the Python interpreter you ran the command with. Additionally, it will add a few directories inside with a copy of the Python interpreter, the standard library, and various supporting files. If you want to verify it was created, enter `dir` in your command prompt and it will list all the directories you have.

To activate your new environment, type `scrapy_tutorial\Scripts\activate.bat` and run it. Now that we're inside our environment, we'll use `pip3 install scrapy` to download the framework and install it within our virtual environment. And that's it.

In your command prompt, run `cd scrapy_tutorial` and then type `scrapy startproject scrapytutorial`. This command will set up all the project files within a new directory automatically.

Before jumping into writing a spider, we first need to take a look at the website we want to scrape and find which elements we can latch on to in order to extract the data we want. For this project, we'll crawl to collect the product name, link, and selling price.

Loading Scrapy Shell

To begin the test, let's run `scrapy shell` and let it load. This will allow us to download the HTML page we want to scrape and interrogate it to figure out what commands we want to use when writing our scraper script.

After the shell finishes loading, we'll use the fetch command and enter the URL we want to download like so: `fetch('')`, and hit enter.
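For readers curious about what `python -m venv scrapy_tutorial` does, the same step can be sketched with Python's standard `venv` module. This is an illustration only: the helper name `create_env` is hypothetical, and the environment is created in a temporary directory without pip so the example runs quickly and offline.

```python
import tempfile
import venv
from pathlib import Path


def create_env(parent: Path, name: str = "scrapy_tutorial") -> Path:
    """Create a virtual environment under `parent` and return its path."""
    env_dir = parent / name
    # with_pip=False keeps this sketch fast and offline. The command-line
    # `python -m venv` installs pip by default, which is what you need
    # before running `pip3 install scrapy`.
    venv.EnvBuilder(with_pip=False).create(env_dir)
    return env_dir


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        env_dir = create_env(Path(tmp))
        # pyvenv.cfg is the file that marks a directory as a virtual environment.
        print((env_dir / "pyvenv.cfg").is_file())
        # The supporting directories mentioned above (their names vary by OS).
        print(sorted(p.name for p in env_dir.iterdir()))
```

In day-to-day use the command-line form is simpler. The module form mainly helps when environment setup needs to be scripted.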
Scrapy is an open-source Python framework designed for web scraping at scale. It gives us the tools needed to extract, process, and store data from websites. The beauty of this framework is how easy it is to build custom spiders, collect specific elements using CSS or XPath selectors, manage output files (JSON, CSV, etc.), and maintain our projects.

If you've ever wanted to build a web scraper but wondered how to get started with Scrapy, you're in the right place. In this Scrapy tutorial, you'll learn, among other things, how to export your scraped data to a JSON or a CSV file.

Although it would be good to have some previous knowledge of how Python works, we're writing this tutorial for complete beginners, so you can be sure you'll be able to follow each step of the process.
